
How to Use Facebook for Book Promotion

Given that my day job is head of content for Social Media Today, meaning that I essentially get paid to analyze and write about social media and digital marketing trends, I often get asked by fellow authors about the best ways to use social media for promotion.

And the answer is that it’s not easy – social media is not a quick-fix that will suddenly get you millions of fans overnight. But it can be hugely valuable, and increasingly so, given the rising use of social platforms, particularly in terms of product recommendations and discovery.

No matter how you look at it, you kind of have to do it, at least in some form. Realistically, most of us are still working to establish a fan base, and we need all the help we can get – and social media can definitely be a help in this respect.

So, in a previous post, I went over how authors can utilise Twitter for book promotion – and that seems like a lot of work, right?

But you don’t need to bother with Twitter, as it’s only got a fraction of the users that Facebook has – everyone and their dog (literally in some cases) has a Facebook profile.

Facebook is where it’s at, where authors should really focus their promotional efforts. Right?

Well, kind of, depending on how you look at it – and really, what works best for your audience.

And that’s an important distinction – it doesn’t matter which platforms you might like more or less, it’s where your audience is at that you need to be.

So how can authors make best use of Facebook? Here are some pointers.

1. Create a Facebook business profile

First off, you can’t be using your personal profile for book promo.

Your personal profile is where you share updates with your family and friends, where your personal connections can link up with you. You don’t want to mix up your book fans and personal connections.

You also need a business profile to run Facebook ads, which, as we’ll cover, you’ll probably want to do at some stage.

Facebook business profiles are where you can showcase yourself as a writer, and if you’re seriously looking to promote your work on the platform, you need one, bottom line.

You can set up a Facebook business Page by heading to this link:

Select ‘Community or Public Figure’, then enter your name and your category (‘Author’) and you’ll be on your way.

Note: You’ll also need to set a Facebook Page URL name at some stage (e.g. https://www.facebook.com/andrewhutchinsonauthor/), or Facebook will just give you a generic one. This is not a huge deal, but it can make your Page easier to find – and it looks better.

You can edit your Page name in the ‘About’ section at the left of your Page screen.

2. Share updates that relate to your writing life

What I mean by this is, don’t share the same updates on your business page as you would on your personal profile.

Your readers, and target readers, don’t care about your cute cat or your holiday snaps – unless, of course, they directly relate to your work. Keep it confined to your book-related news, and create specific posts for your Facebook Page. Don’t cross-post. Each platform is very different. Create unique updates, related to writing, for your Facebook Page.

Tim Winton is a good example of this.

Tim shares content related to his work, articles he’s written, publishing news – basically, nothing’s off-topic, and that’s important, because it will ensure that those who do follow your writing page get updates about your writing, which is what they’re following you for.

3. Don’t overpost

One of the key rules to stick to on Facebook is ‘don’t overpost’.

Your fans are following your Page to keep in touch with your latest news, but they don’t need ten updates a day cluttering their feeds.

As noted earlier, people generally use Facebook to stay in touch with friends and family – along with some brands and celebrities in between. Go overboard, and you’ll run the risk of them unfollowing – and what’s more, you really don’t need to post too much.

Sure, you want to maintain activity, and ensure that you stay front of mind with potential readers. But you’re not releasing a new book every day, so there’s no urgent need to keep them informed of every single thing in order to guide them towards the local book store.

For most authors, Facebook is about maintaining connection with your readers, as opposed to hard selling. Keep them updated with a consistent stream of news, but don’t overdo it.

Matthew Reilly is a good example of this.

Reilly has over 61k Facebook followers, and he regularly sees high engagement on his posts. Of course, Matt benefits from his established fan base, which you likely don’t have, but his approach to Facebook is consistent, measured and about right for maintaining connection with his fans.

Note, too, that he recently launched a new YouTube channel, showing that even the big players need to maintain activity and move with the times. If you are going to record video content, however, it’s better to upload it to each platform direct for optimal performance, as opposed to linking off to another platform, as Matt has done here.

Matt posts to his Facebook Page once per week, in general, ramping that up around book launch dates/events. That’s a pretty solid guideline to follow – and that’ll still give you plenty of time to, you know, write stuff, as opposed to spending your days maintaining your social streams.

Also, a few notes here on Facebook’s mysterious algorithm.

Whenever you’re talking about Facebook posting practices, someone always arcs up with their sudden advanced PhD in machine learning, and starts talking about how Facebook’s algorithm works and defines reach.

There are a lot of misconceptions here, but the key pointers you probably need are:

- While you shouldn’t overpost, not every one of your followers will see every one of your posts anyway. Facebook’s algorithm will show your posts to a selection of people who follow your Page, and then, if they engage with it, it’ll show more. The system is built to maximize engagement, so if your posts are generating likes and comments, more people will see them. This means that sparking engagement with your updates is important, but not more important than maintaining connection to your author brand (i.e. posting relevant stuff).

- This also means that, theoretically, you can post more often, as it’s not like you’re going to flood your audience anyway. I would advise against this, but you could post several times a day and it wouldn’t necessarily be a major problem – though it probably won’t help much either.

- The performance of your past posts does relate to your future updates – so if you have a post that goes viral, your next post after that will subsequently also see a reach boost. Some try to utilise this by posting trending memes and inspirational quotes that will generate likes, even if they aren’t related to their broader branding goals. Facebook knows that people do this, and its system will correct for it if detected. It also clutters up your Page, turns off real fans, and even if it does expand your reach, it likely won’t help you connect with people who will actually purchase your books. So, you can try this, but a longer-term, consistent approach will, eventually, lead to better results.

- There’s a rumour that Facebook’s algorithm gives a reach boost to posts which include words like ‘engagement’, ‘married’, ‘new job’, ‘big news’, ‘baby’ and various others. This is – or at least was – true, but it’s also not likely to be a major help (Facebook reportedly implemented this after CEO Mark Zuckerberg complained that he missed a post from a friend who’d had a baby).

- Hashtags don’t really work on Facebook, which is another reason why you shouldn’t cross-post from other platforms.

- Recency is an algorithm consideration, so it’s worth keeping an eye on your analytics and checking when your audience is active. Post when more people are online, and theoretically, more of them will see it – but it is also worth noting that many brands have seen good results when they post in quieter times, as there are fewer updates in the stream vying for attention.

Basically, Facebook wants to keep people on-site as long as possible, and it does so by showing people more of the content that they’re interested in. Post what people want to see and you’ll be on the right track – but even more than that, post what people who buy your books want to see and you’ll work towards establishing a stronger platform for promotion.

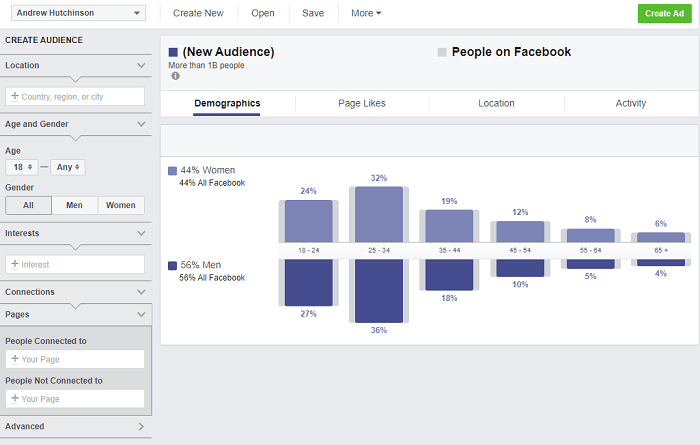

4. Use Audience Insights

Not everyone knows about Facebook’s Audience Insights, which is terrible because Facebook can connect you with so much helpful info, if you know where to look.

If you have a Facebook Page, and you go to this link, you’ll be able to access Audience Insights, which will show you who the fans of your Page are – where they live, how old they are, and other demographic insights.

That’s helpful, but if you’re just starting out, you’re likely looking at an audience of your friends and family, not necessarily your target, book-buying audience.

But here’s where it gets interesting – along with your own page, you can also look up other interests on Facebook, including other authors. And along with demographic insights, it’ll also show you what other things their fans are interested in.

So if I look up an author who I like, whose readers I think might also like my stuff, I can check out what interests them, giving me a better profile of my target book market.

As you can see here, I’ve created a new audience of fans of American author Chuck Palahniuk, limited to those within Australia. Now I can see what other Pages Palahniuk fans like, and based on this, I could post more content that ties into these interest areas in order to boost my potential appeal, or I could use them in my ad targeting, which, given Facebook’s advanced targeting options, I’m probably going to use around launch time.

Which is the next point:

5. Use Facebook ads

I know. I know you don’t want to spend a heap.

I get it – we’re authors, and the majority of us are not raking in the cash from our fat royalty checks and movie deals.

I know you don’t have a heap to spend on promo, but given the advanced audience targeting options available, and unmatched potential reach, Facebook ads can be a great option.

As noted in the previous point, you can target your ads to fans of authors whose work is similar to yours, or around common interests that you find among their fans.

As you can see here, for this (mock) campaign, I’m targeting an audience of people who are interested in movies and TV shows which I think are kind of similar to the themes of my novel ONE. You’ll also note that I’ve excluded people who are interested in book genres that are not related to what I write.

You should opt for in-feed ads – no one checks those right-rail updates – and if you have a visual ad, you can also include Instagram Stories placement (though I would advise that you create specific campaigns for each platform).

It’s not an exact science, and you should probably run a couple of ad variations to see what works best. You can then stop the ones that don’t produce (after, say, a week) and re-allocate your budget to those that are gaining traction.
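If you like to keep track of this sort of thing in a spreadsheet or a script, the stop-and-reallocate step can be sketched roughly as below. To be clear, this is only an illustration: the ad names, budgets and results are made up, the `AdVariation` and `reallocate` names are hypothetical, and nothing here talks to Facebook itself. You would copy the spend and results figures across from your own ad reports by hand.

```python
from dataclasses import dataclass


@dataclass
class AdVariation:
    name: str
    daily_budget: float  # dollars per day (made-up figure)
    spend: float         # total spent over the test week (made-up figure)
    results: int         # e.g. people reached, as shown in your ad report


def cost_per_result(ad: AdVariation) -> float:
    # An ad with no results at all is treated as infinitely expensive.
    return float("inf") if ad.results == 0 else ad.spend / ad.results


def reallocate(ads: list[AdVariation], keep: int = 1) -> dict[str, float]:
    """Keep the `keep` cheapest variations and split the budget of the
    stopped variations evenly among them."""
    if keep < 1:
        raise ValueError("keep at least one variation running")
    ranked = sorted(ads, key=cost_per_result)
    winners, losers = ranked[:keep], ranked[keep:]
    freed = sum(ad.daily_budget for ad in losers)
    bonus = freed / len(winners)
    return {ad.name: ad.daily_budget + bonus for ad in winners}


# Hypothetical results after one week of running three variations.
ads = [
    AdVariation("cover image", daily_budget=5.0, spend=35.0, results=700),
    AdVariation("quote graphic", daily_budget=5.0, spend=35.0, results=350),
    AdVariation("video teaser", daily_budget=5.0, spend=35.0, results=1400),
]

new_budgets = reallocate(ads, keep=1)
print(new_budgets)
```

Ranking by cost per result is just one simple way to decide what "gaining traction" means. You could just as easily rank by raw reach, or keep two variations running instead of one by passing `keep=2`.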

You should also optimize for awareness where possible, as you want to make as many people as possible aware of your book, as opposed to driving viewers back to a landing page, as such.

Use a page on your website, or your publisher’s, and see what results you get. It may be hard to accurately measure, as you won’t know whether seeing your ad results in a subsequent book store visit. But with fewer bookshops, and fewer festivals and media opportunities, awareness is key.

Facebook ads can be great for this.

6. Get More Page Fans

But hang on, I hear you say, all of these tips relate to functionally operating a Facebook Page, but if you don’t have any followers, you’re talking to no one.

So how do you build your audience in order to maximize engagement?

Getting more people to Like your Page takes work, but here are a couple of options you could consider, depending on how hard you want to push your promotions.

- First, you’re going to get your family and friends to Like your Page, which will give you a starting point. This is not always ideal, because your family and friends are likely not your ideal target, book-buying audience (which can skew your Page data), but you can prompt them to share with friends, which will give you a base to work from. And they’re going to Like your Page anyway, so it’s best to try to use that to your advantage.

- If you have an email list, send out a link to your Facebook Page. If you’re in any writers’ groups, clubs or organizations that have an email newsletter, maybe query them to see if they might be able to include a link.

- Share the link to your Facebook Page on your other social media profiles if you have them.

- Make a list of Facebook book groups that might be interested in your book, then contact the admins offering to do a Q and A or similar event. You won’t hear back from all of them, but it may be another avenue to boost promotion, particularly around launch date (note that around half of all Facebook users are active in at least one Facebook group).

- You could consider running a giveaway to help promote your book. There are specific rules around Facebook giveaways, but you are allowed to ask people to Like your Page to enter a competition, which could be another way to boost your following.

- Blogging and guest-blogging are additional ways in which you can help get the word out, and make more people aware of your broader online presence.

- It’s worth leaning on writer friends to ask them to Like or share your Facebook Page, particularly if they’re established, as that will help get your name in front of more readers.

- Add social media buttons to your website, so people can easily find your related profiles.

- If you post a picture from an event, make sure you tag the host and any other authors in the image, which can lead to re-shares and more exposure.

Other notes

- Visuals are important. Still image posts perform better than basic text updates on Facebook, while videos can generate a heap of engagement. As such, a video preview of some kind could be worth the investment, while Facebook Live Q and A sessions are another thing to consider.

- Quizzes and polls also generate engagement and can be tied into the key themes of your book.

- Tara Moss shares some great visual posts, if you were looking for examples, while she also uses the slideshow option for her Facebook Page background image, enabling her to showcase more of her work. This is a good option – but if/when you do update your profile images, keep your phone handy so you can ensure that it looks good on mobile and desktop devices.

- Also, ensure all your profile details are filled out, and that you have the ‘Author’ Page category selected (this will help interested people find your Page)

Those are the basics of an effective author presence on Facebook. There are, of course, other elements you could consider – like Facebook Stories – but as a jumping-off point, this outline should position you to build an engaging, effective presence and maintain connection with more readers.

How to Use Twitter for Book Promotion

I came across this tweet recently, which captures a common frustration for authors on Twitter:

Here’s the challenge:

Tweet a picture of cat wearing a tuxedo: 5,875 likes, 159 RTs, and 3,545 comments.

Tweet about the book you spent years writing: 2 likes, 0 RTs, and 1 comment (from a sexbot).

How do we fix this?

— chad (@writingiswar) January 19, 2020

I actually get asked about this quite a lot – my day job is head of content for Social Media Today, so I essentially get paid to analyze and write about social media and digital marketing trends. Combine that with the fact that I’m an author and logically, I should know how to make best use of Twitter for authors and book promotion, right?

And I do, but what I normally add to this when I do respond to such questions is ‘but you’re not gonna want to hear it.’

Why is that? Because it takes time, it takes effort – time and effort that writers would generally rather be expending on, you know, their actual writing projects.

The truth is, if you want to utilize Twitter as a promotional tool for your books, then you have to first build your platform, and earn the right to pitch your latest work to a receptive Twitter audience.

How do you do that? Here’s an overview of a few options you could consider.

1. Build a Platform Around an Issue

Now, to clarify, building a ‘platform’ in this context relates to establishing a following of people who are interested in what you do – and ideally, what you write about. If you can establish yourself as an authority or leading voice within a certain niche, then people will seek more information on that topic from you, and in that way, you can utilize Twitter as a promotional tool because your audience is interested in the topic and what you have to say about it.

To do this, you need to get involved in the conversation. Let’s say you write about climate change in your work – you would start by following the relevant leaders in that field and engaging with them, including within the replies on their tweets, wherever relevant. That, over time, will get your name in front of other people who are interested in the same – so you’re gaining exposure to a group of Twitter users who are interested in that topic.

The more you can get involved and build your profile – through tweet engagement, sharing your own posts, sharing others’ relevant content, etc. – the more you’ll become known in that niche, so when you do publish your book, which relates to climate change, the audience that you’ve established will now be more likely to engage with it.

Author Clementine Ford is a good example of this – Clem writes about gender equality and feminism, and sees a lot of engagement on her tweets as a result, including her book announcements.

Attn parents and school librarians!

I’ve been waiting to announce this for AGES. I am so thrilled to announce that I’ve just signed a contract with my darling publisher @allenandunwin… https://t.co/gvLNoQhPhL— Clementine Ford 🧟♀️ (@clementine_ford) March 22, 2019

Clem has built a Twitter audience of more than 132k followers, and while not every single one of her tweets is about her focus subjects, more than 90% of them are, and combined with her newspaper articles and media appearances discussing the same, Clem has built an audience which knows what they’ll get, and will therefore be a likely market for her books.

But this approach does get a little murky for fiction authors, whose body of work is likely not dedicated to a few key subject areas.

As an example, author Alice Bishop released a collection of short stories last year which looks at the Black Saturday bushfires in Victoria – Bishop lived in one of the bushfire hit regions, so has first-hand insight on the destruction.

slightly terrified but also excited (!): join me at @ReadingsBooks carlton tonight (6.30pm start) for the official launch of A CONSTANT HUM.

our friends (@Robdolanwines)—who helped after black saturday—will be there w/ beautiful wine. the great TONY BIRCH will be speaking too. pic.twitter.com/rbnVbHWBnc

— Alice Bishop (@BishopAlice) July 12, 2019

Alice hadn’t established herself as an authority on bushfires beforehand (which, as a fiction author, wasn’t her aim), but over time, she has been able to build more of an audience on Twitter based on bushfire coverage – sharing articles about the most recent fires, engaging with people from impacted communities via tweet, gaining a following as someone who writes about fires and their aftermath.

Focusing on a subject has arguably helped Alice build a more engaged audience on Twitter, but that same audience likely won’t be as beneficial if Alice’s next book isn’t related to the same.

In this sense, topicality can help in your promotion efforts, but it’s also likely too confining for fiction authors, who switch topics significantly from one publication to the next. If you dedicate yourself to one key area, it will definitely bring promotional value on Twitter over time, through establishing yourself as an expert in that arena. But this may not be an effective approach for novelists.

This is also a problem I see with the modern publishing approach, where publishers seek a topical angle on your work, as opposed to focusing on the story and writing itself. For one, it feels like, over time, literature is merging too much into activism, which can alienate a large audience subset (people are already inundated with politics in their social media feeds every day – the last thing they want is to be preached to in their recreational reading habits). For another, and as noted, it pigeonholes writers into certain topic streams.

But then again, in order to get press coverage, and maximize promotional value, maybe they need a topical angle to pique the interest of relevant editors.

Regardless, if your writing regularly covers a specific focus area like this, this is one way in which you can use Twitter to establish yourself. And once you’ve built an audience of people engaged in the subject, they’ll also likely be interested in your books.

2. Build a Platform within the Writing Community

But what if you don’t write about a specific topic? Another approach you could take is to build a platform within the Twitter writing community, which can connect you to other people who are interested in writing – and by extension, readers who are interested in their work.

To clarify, this doesn’t mean that you should connect to every writer you can and blindly re-tweet each other’s latest book news. Doing this will likely see you end up talking amongst yourselves, and promoting your latest books to no one other than other writers, who are not your target audience. It can be great, and beneficial, to connect with other writers on Twitter for advice, support, etc. But in a promotional sense, it likely won’t help you a heap.

This is where you need to differentiate your purpose for Twitter use, and consider the audience that you ultimately need to reach.

Building a platform within the writing community for promotion relates more to connecting with other authors, with a broader view to utilizing those connections in order to reach more potential readers – i.e. their audience of readers who are already following them.

But this takes a lot of time and effort – Angela Meyer is a good example of this.

The launch of #ASuperiorSpectre @ReadingsBooks on Thu was absolutely glorious. Thank you to EVERYONE who came ❤️ Special thanks to @justine_hyde for her beautiful launch speech & to @thebooksdesk for flying from Germany! A few pix w pals 🔽 @ventura_press pic.twitter.com/wtr4yoI9f6

— Angela Meyer (@LiteraryMinded) August 12, 2018

Angela has spent literally decades building her profile within the literary sector, first starting as a book blogger, then as a publisher, before finally becoming an author herself. Through all of this, Angela has established connection with a heap of authors and publishing types, who themselves have their own followings of interested readers. When Angela does tweet about a book launch, many of the people who re-tweet it are established authors and publishing folk.

That gives Angela not only reach to writers, but importantly, reach to more readers – but again, Angela has built that platform through years of work, establishing a network on Twitter of people who are now willing to advocate on her behalf.

Angela does also share content around gender identification, which is an element explored in her work, so she also uses topicality to broaden her platform. But an argument can be made that by establishing stronger ties within the literary community, you’ll stand a better chance of utilizing Twitter for promotion.

See also podcasters like Kate Mildenhall and Katherine Collette, who both see higher engagement on their tweets as a result of their established identities within the writing community, and subsequent connection to high profile authors who will be more likely to help them with re-shares and distribution on their announcements.

‘But isn’t that just authors sharing with each other, which you just said isn’t effective?’

Kind of, but in this way, you’re utilizing bigger name authors, those who already have established followings of willing readers. Now, you’re not only getting exposure to other authors, but importantly, the book-buying public.

It’s also worth noting here that with Twitter working to show more users tweets that they may be interested in, even Likes can have the same effect as re-tweets. Twitter’s algorithm will display a selection of tweets liked by people you follow in your feed – so even if you can get a prominent person in your field to simply like one of your tweets, there’s a greater chance of exposure to a reading audience.

3. Build a Platform Within Your Niche

Focusing on a single topic area can be restrictive, and building momentum for a podcast or similar in order to establish a place within the mainstream lit community takes time.

So what are your other options?

Establishing an audience within a specific niche, related to your work, is another way to maximize Twitter for promotion – though again, it doesn’t come easy.

In this way, you could tweet about things that interest you in, say, the horror genre in order to establish connection with like-minded users. You could share Hollywood news, posts about the horror writing process, engage with the community around the latest content. And through this, ideally, you can build your profile among people who will eventually also be interested in your stuff.

Author Maria Lewis is a good example of this:

Cover reveal time…my FIFTH book The Wailing Woman is coming out this November. It follows a teenage banshee as she navigates the prickly supernatural world of Sydney, Australia https://t.co/a7KCm28Gpj pic.twitter.com/wivrnRti9V

— Maria Lewis (@moviemazz) June 18, 2019

Through her tweets, Lewis shares her interests in film, literature and the arts more broadly, which largely relate to the themes of her own books. Really, Lewis uses a combination of all three of these approaches – her books touch on topical issues, she hosts a podcast (and has previously been a host on SBS TV), and she shares a consistent tweet stream of the things that she’s interested in, further connecting her with like-minded Twitter users.

But again, this didn’t happen overnight. Lewis has also worked for years to establish herself as a commentator, through her work as a journalist and presenter, and she’s now earned an audience of like-minded fans who engage with her tweets.

But it is another approach – if you write in a specific genre, you can use your tweets to connect with readers who are interested in the same.

And the more you can build your brand, tweet-by-tweet, the more you’ll be able to connect with an audience that will be increasingly receptive to your own content.

4. Just Don’t Worry About it

So, all of these approaches take a lot of work – but it’s also worth noting that you don’t have to use Twitter as a promotional vehicle.

Many successful authors don’t even have a Twitter presence – or some, like American author Jesse Ball, just share random images or cryptic messages for fans.

— Jesse Ball (@llabessej) January 13, 2020

Many authors also just share what they like, regardless of themes or ideas, and still do fine. While you can use Twitter as a means to promote your work, it’s not essential – but if you are getting frustrated, as with the example at the top of this post, with the lack of traction for your book tweets, it’s worth considering how those who do see significant engagement on their book tweets have worked to establish their presence.

‘So why don’t you do this?’

Yeah, I don’t personally tweet along thematic lines, or even along book-specific lines more broadly. That, in my case, is due to conflicting professional interests – I’m the head writer for Social Media Today, which is where the vast majority of my Twitter followers have come from, so if I share more fiction-related content, it likely won’t get a heap of traction. As outlined in the examples above, I haven’t established a platform for book promotion specifically, and because I’m in between these two worlds, I don’t personally make Twitter a huge focus – though I do use it to connect with other authors, which I find hugely beneficial.

In terms of other pointers, I would add these tips, based on examples I’ve seen:

- Don’t just re-tweet – ever – Well, maybe not ever, but if you’re looking to establish yourself in a specific area, you need to be including your opinion when you share things. Blank re-tweets likely won’t help improve your tweet engagement (as your followers will be getting these in their feed with no context) and won’t further establish you as a person of interest in that field. Better to share with your own thoughts included. A notable exception to this is if the tweet is about you/your work – if a high profile person says your book is great, then you re-tweet that for sure, as this does work to further underline your brand through external endorsement.

- Follow-for-follow is outdated – Yes, you want to have lots of followers, but followers who are just doing so in order to boost their own audience counts won’t engage with your tweets – and won’t buy your books.

- Don’t follow trends – Sure, tweeting a cute cat picture or an inspirational quote might inflate your tweet metrics, but will it help connect you with people who are actually going to buy your book? Making a funny video might get more engagement – but if it’s not actively working towards building your presence in your key area of interest, and linking you through to that audience, it’s probably not really helping. Sharing insights into your personal life is fine, but keep in mind your broader strategic focus – if indeed you are aiming to use Twitter for max promotional value.

- It’s not the algorithm – Some have suggested that it may be worth sharing some high-engagement tweets, even if they’re off-topic, in order to ingratiate yourself with Twitter’s algorithm. That way, the theory goes, when you share your subsequent promo tweets, you’ll get more reach. That’s not really a relevant consideration on Twitter – on Facebook it is, to a degree, but Twitter’s algorithm is more aligned to each individual tweet, and any reach boost you might achieve is likely not worth the effort (worth noting, too, that Twitter is working to better align itself around topics, further lessening any such impact).

As always, some will read this and respond with ambivalence. ‘But I like re-tweeting book launch info and connecting with fellow authors, and that works for me’. And that’s fine, if you’re happy doing what you do, then all good. But let’s face it, if you were truly satisfied with the results you’re seeing, you wouldn’t be reading this.

The bottom line is that there are ways to utilize Twitter to promote your work, but the pathway to true success is not easy. If you’re looking for a quick fix, a quick-hitting way to get the message out about your latest work, Twitter probably isn’t the best option.

Twitter is a brand-building platform, and as such, you need to take the time to build the right audience, those who will eventually be receptive to your promotional messaging.